Building on the Fediverse: A First Experiment

In my last post, I explored the idea that AI and the fediverse together might offer a path back to a more open and machine-understandable web. Rather than leaving that as a purely conceptual discussion, I wanted to test the idea in a simple and concrete way.

So I started with a small experiment.

The idea

The goal was straightforward: take my Mastodon feed and treat it as raw data instead of as a user interface. Instead of thinking in terms of timelines, scrolling, or engagement, I wanted to see what it would look like to simply pull the data, inspect it, and work with it programmatically.

What I built

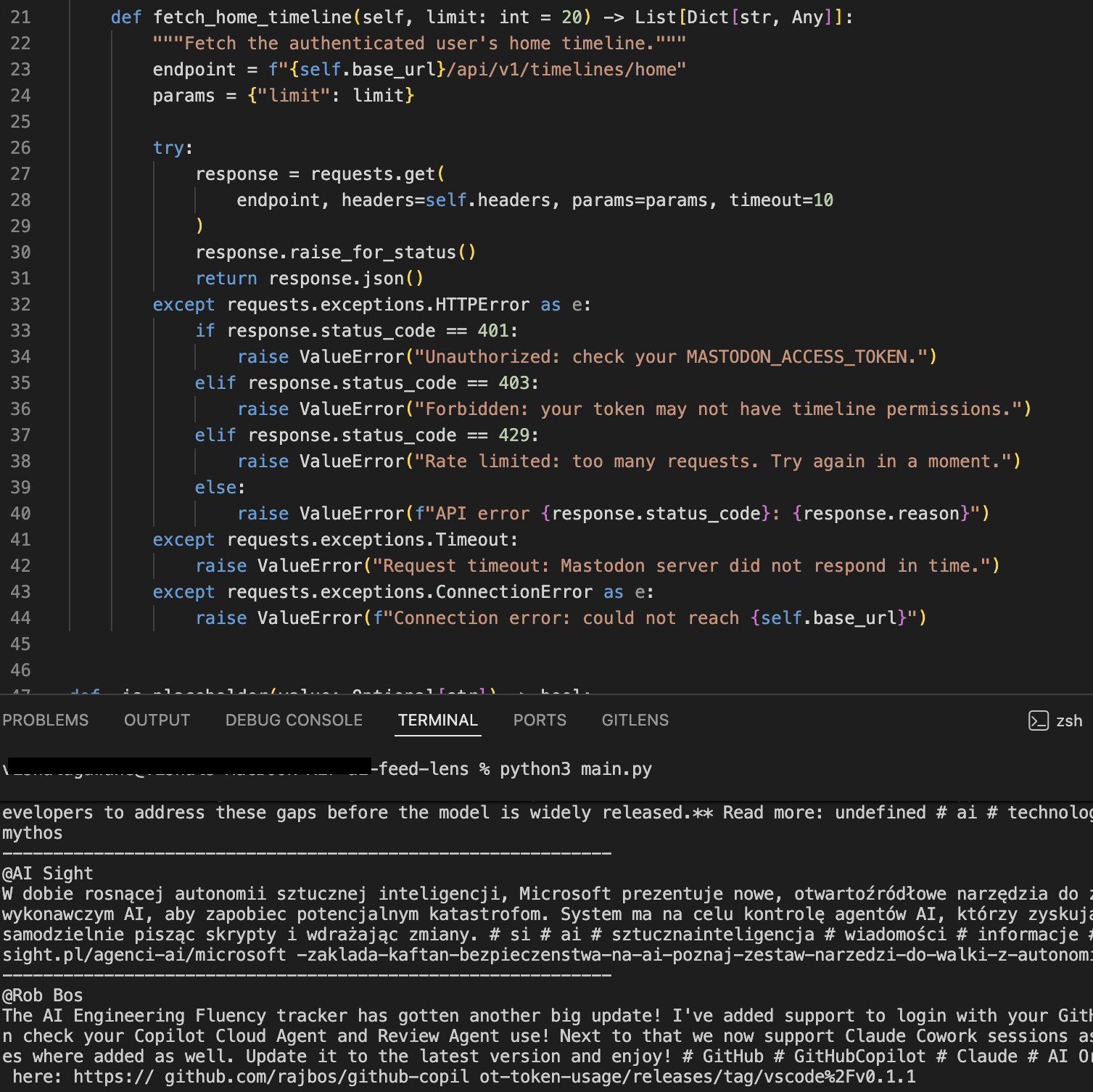

I wrote a small Python script that pulls my home timeline using the Mastodon API, cleans up the HTML content in each post, and prints it out as readable text.

There’s no UI, no database, and no persistence layer—just a simple script that turns a feed into something I can directly examine.

Code (simplified)

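    # Fetch the home timeline as a list of post objects
    posts = fetch_home_timeline()

    for post in posts:
        # Each post's content arrives as HTML; reduce it to readable text
        content = clean_html(post["content"])
        print(content)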
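For readers who want something closer to runnable, here is a minimal sketch of what the two helpers in the simplified loop might look like using only the Python standard library. It is not necessarily how my script does it: the `base_url`, `token`, and `limit` parameters and the `render_posts` helper are assumptions added for illustration, and the HTML cleanup is deliberately naive.

```python
import json
import urllib.request
from html.parser import HTMLParser


class _TextExtractor(HTMLParser):
    """Collects text nodes, turning <br> and </p> into line breaks."""

    def __init__(self):
        super().__init__()
        self.parts = []

    def handle_starttag(self, tag, attrs):
        if tag == "br":
            self.parts.append("\n")

    def handle_endtag(self, tag):
        if tag == "p":
            self.parts.append("\n\n")

    def handle_data(self, data):
        self.parts.append(data)


def clean_html(html):
    """Reduce a post's HTML content to plain text."""
    parser = _TextExtractor()
    parser.feed(html)
    parser.close()
    return "".join(parser.parts).strip()


def fetch_home_timeline(base_url, token, limit=20):
    """Fetch the authenticated user's home timeline as a list of dicts.

    base_url (e.g. "https://example.social") and token are assumed inputs;
    the token needs permission to read the account's statuses.
    """
    request = urllib.request.Request(
        f"{base_url}/api/v1/timelines/home?limit={limit}",
        headers={"Authorization": f"Bearer {token}"},
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)


def render_posts(posts):
    """Hypothetical helper: return the cleaned text of each post."""
    return [clean_html(post["content"]) for post in posts]
```

With a real instance URL and access token, `print("\n---\n".join(render_posts(fetch_home_timeline(base_url, token))))` would print the feed as plain text. Keeping the fetch separate from the rendering means the cleanup can be tried on saved JSON without touching the network.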

What the output looks like

Here’s what the script looks like when running locally:

Seeing the feed in this form changes how you think about it. Instead of something you passively consume through an interface, it becomes something you can inspect, transform, and build on.

What felt different

Working with Mastodon in this way feels noticeably different from working with typical social platforms. The API is open and well-defined, the data is structured predictably (each post is a JSON object whose content field holds HTML), and accessing your own timeline takes little more than an access token for your account.
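To make that shape concrete, here is a small sketch using a trimmed, made-up post. The field names (`id`, `created_at`, `url`, `account`, `content`, `tags`) follow Mastodon's status format, but the values are invented, real responses carry many more fields, and `summarize_status` is a hypothetical helper rather than part of my script.

```python
from datetime import datetime

# A trimmed, invented status for illustration only.
SAMPLE_STATUS = {
    "id": "1",
    "created_at": "2024-01-01T12:00:00.000Z",
    "url": "https://example.social/@alice/1",
    "account": {"acct": "alice@example.social", "display_name": "Alice"},
    "content": '<p>Trying out the <a href="https://example.social/tags/fediverse">#fediverse</a></p>',
    "tags": [{"name": "fediverse", "url": "https://example.social/tags/fediverse"}],
}


def summarize_status(status):
    """Pick out a few structured fields; the post body stays as raw HTML."""
    # Normalize the trailing "Z" so older Python versions can parse the timestamp.
    created = datetime.fromisoformat(status["created_at"].replace("Z", "+00:00"))
    return {
        "author": status["account"]["acct"],
        "posted": created.date().isoformat(),
        "hashtags": [tag["name"] for tag in status["tags"]],
        "html": status["content"],
    }


if __name__ == "__main__":
    for key, value in summarize_status(SAMPLE_STATUS).items():
        print(f"{key}: {value}")
```

The metadata is plain JSON you can index into directly, while the post body needs one extra step to strip the HTML.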

Taken together, this makes it feel less like you’re interacting with a closed product and more like you’re working directly with the underlying system.

Connecting back to the bigger idea

In my previous post, I talked about the semantic web and the idea of making the web machine-readable by explicitly adding structure. What surprised me here is that Mastodon data already moves part of the way in that direction.

It’s not fully semantic in the traditional sense, but it is accessible, consistent, and easy to process. That makes it a practical starting point for layering additional meaning on top using AI.

What’s next

The next step is to introduce a semantic layer using AI, starting with things like generating summaries, extracting topics, and identifying entities and relationships across posts.
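I haven't built this yet, so the following is only a sketch of the shape such a layer might take. Everything here is hypothetical: the `Annotation` container, the `annotate_posts` function, and especially `hashtag_annotator`, which is a non-AI placeholder (hashtags as topics, first sentence as summary) standing in for whatever model eventually does the real work.

```python
import re
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class Annotation:
    """Hypothetical container for what a semantic layer attaches to one post."""
    summary: str = ""
    topics: List[str] = field(default_factory=list)
    entities: List[str] = field(default_factory=list)


def annotate_posts(
    texts: List[str], annotate: Callable[[str], Annotation]
) -> List[Tuple[str, Annotation]]:
    """Apply an annotator to each post's plain text and pair text with result."""
    return [(text, annotate(text)) for text in texts]


def hashtag_annotator(text: str) -> Annotation:
    """Placeholder annotator, no AI involved.

    Topics are the post's hashtags (lowercased, de-duplicated, sorted);
    the summary is everything up to the first sentence break.
    Entities are left empty because this stand-in cannot infer them.
    """
    topics = sorted({tag.lower() for tag in re.findall(r"#(\w+)", text)})
    summary = text.split(". ")[0]
    return Annotation(summary=summary, topics=topics)


if __name__ == "__main__":
    posts = ["Exploring #ActivityPub and #AI today. More soon."]
    for text, note in annotate_posts(posts, hashtag_annotator):
        print(note)
```

Passing the annotator in as a function keeps the pipeline unchanged when the placeholder is swapped for a model-backed version.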

The goal isn’t to build a product right away, but to explore what happens when you take open, federated data and apply systems that can infer structure from it.

Closing

This is still a small experiment, but it already feels like a useful way to explore a bigger question: what becomes possible when you combine open protocols like ActivityPub with systems that can understand and organize information automatically?